From Symptom to Reality in Modern History

GA 185

Lecture VII

1 November 1918, Dornach

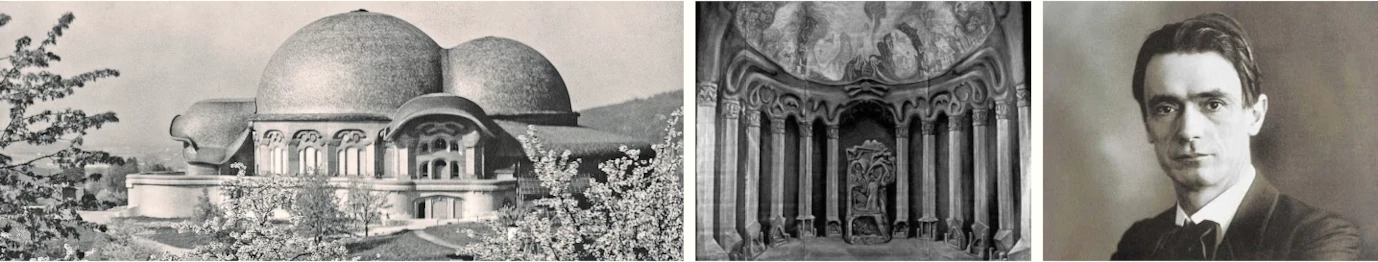

In the course of our enquiries during the next few days I should like to draw your attention to two things which seemingly bear little relation to each other. But when we have concluded our enquiries you will realize that they are closely connected. I should like in fact to touch upon certain matters which will provide points of view, symptomatic points d'appui concerning the development of religions in the course of the present fifth post-Atlantean epoch. And on the other hand, I would also like to show you in what respect the spiritual life that we wish to cultivate may be associated with the building which bears the name ‘Goetheanum.’

It seems to me that the decisions taken in such a case have a certain importance, especially at the present time. We are now at a stage in the evolution of mankind when the future holds unknown possibilities and when it is important to face courageously an uncertain future and when it is also important, from out of the deepest impulses, to take decisions to which one attaches a certain significance. The external reason for choosing the name ‘Goetheanum’ seems to be this: I expressed the opinion a short time ago in public lectures that, for my part, I should like the centre for the cultivation of the spiritual orientation that I envisage to be called for preference the Goetheanum. The name to be decided upon had already been discussed last year; and this year a few of our members decided to support the choice of the name ‘Goetheanum.’ As I said recently there are many reasons for this choice, reasons which I find difficult to express in words. Perhaps they will become clear to you if I start today from considerations similar to those which I dealt with here last Sunday, by creating a basis for the study of the history of religions which we will undertake in these lectures.

You know of course—and I would not touch upon personal matters if they were not connected with relevant issues, and also with matters concerning the Goetheanum—you know that my first literary activity is associated with the name of Goethe and that it was developed in a domain in which today, even for those who refuse to open their eyes, who prefer to remain asleep, the powerful catastrophic happenings of our time are adumbrated. My view of Goethe from the standpoint of spiritual science, and equally what I said recently in relation to The Philosophy of Freedom, are of course a personal matter; on the other hand, however, this personal factor is intimately linked with the march of events in recent decades. The origin of my The Philosophy of Freedom and of my Goethe publications is closely connected with the fact that, up to the end of the eighties I lived in Austria and then moved to Germany, first to Weimar and then to Berlin, a connection of course that is purely external. But when we reflect upon this external connection we are gradually led, in the light of the facts, if we apprehend the symptoms aright, to an understanding of the inner significance. From the historical sketches I have outlined you will have observed that I am obliged to apply to life what I call historical symptomatology, that I must comprehend history as well as individual human lives from out of their symptoms and manifestations because they are pointers to the real inner happenings. One must really have the will to look beyond external facts in order to arrive at their inner meaning.

Many people today would like to learn to develop super-sensible vision, but clairvoyance is difficult to achieve and the majority would prefer to spare themselves the effort. That is why it is often the case today that for those naturally endowed with clairvoyance there is a dichotomy between their external life and their clairvoyant faculty. Indeed, where this dichotomy exists super-sensible vision is of little value and is seldom able to transcend personal factors. Our epoch is an age of transition. Every epoch, of course, is an age of transition. It is simply a question of realizing what is transmitted. Something of importance is transmitted, something that touches man in his inmost being and is of vital importance for his inner life. If we examine objectively what the so-called educated public has pursued the world over in recent decades, we are left with a sorry picture—the picture of a humanity that is fast asleep. This is not intended as a criticism, nor as an invitation to pessimism, but as a stimulus to awaken in man those forces which will enable him to attain, at least provisionally, his most important goal, namely, to develop insight, real insight into things. Our present age must shed certain illusions and see things as they really are.

Do not begin by asking: what must I do, what must others do? For the majority of people today such questions are inopportune. The important question is: how do I gain insight into the present situation? When one has adequate insight, one will follow the right course. That which must be developed will assuredly be developed when we have the right insight or understanding. But this entails a change of outlook. Above all men must clearly recognize that external events are in reality simply symptoms of an inner process of evolution occurring in the field of the super-sensible, a process that embraces not only historical life, but also every individual, every one of us in the fullness of our being.

Let me quote the case of Hamerling[1] by way of illustration. Today we are very proud that we can apply the law of causality in all kinds of fields; but this is a fatal illusion. Those who are familiar with Hamerling's life know how important for his whole inner development was the following circumstance. After acting for a short time as a ‘supply’ teacher in Graz (i.e. a kind of temporary post before one is appointed to a permanent position in a Gymnasium) he was transferred to Trieste. From there he was able to spend several holidays in Venice. When we recall the ten years which Hamerling spent on the Adriatic coast—he divided his time between teaching in Trieste and visiting Venice—we see how he was fired with ardent enthusiasm for all that the south could offer him, how he derived spiritual nourishment for his later poetry from his experiences there. The real Hamerling, the Hamerling we know, would have been a different person if he had not spent the ten years in question in Trieste with the opportunity for holidays in Venice!

[1] R. Hamerling (1830–89). Early work lyrical poems. Homunculus (1888) attacked the soullessness of the epoch. Philosophy opposed to monism and pessimism.

Now suppose some thoroughly philistine professor were writing a biography of Hamerling and wanted to know how it was that Hamerling came to be transferred to Trieste precisely at this decisive moment in his life, and how a man without means, who was entirely dependent upon his salary, happened to be transferred to Trieste at this particular moment. I will give you the external explanation. Hamerling, as I have said, held at that time a temporary appointment (he was a supply teacher, as we say in Austria) at the Gymnasium[2] in Graz. These supply teachers are anxious to find a permanent appointment, and since this is a matter for the authorities, the applicant for such a post has to send in his various qualifications—written on one side of the application form—enclosing testimonials, etcetera. The application is then forwarded to a higher authority who in turn forwards it to still higher authorities, etcetera, etcetera. There is no need to describe the procedure further. The headmaster of the Gymnasium in Graz, where Hamerling worked as a temporary assistant, was the worthy Kaltenbrunner. Hamerling heard that there was a vacancy for a master in Budapest. At that time the Dual Monarchy did not exist and teachers could be transferred from Graz to Budapest and from Budapest to Graz. Hamerling applied for the post in Budapest and handed in his application, written in copperplate, together with the necessary testimonials to the headmaster, the worthy Kaltenbrunner, who placed it in a drawer and forgot all about it. Consequently the post in Budapest was given to another candidate. Hamerling was not appointed because Kaltenbrunner had forgotten to forward the application to the higher authorities, who, if they had not forgotten to do so, would have forwarded it to their immediate superiors and these in their turn to their superiors, etcetera, until it reached the minister, when it would have been referred back to the lower echelons and have passed down the bureaucratic ladder. Thus another candidate was appointed to the post in Budapest, and Hamerling spent the ten years which were decisive for his life, not in Budapest, but in Trieste, because sometime later a post fell vacant there to which he was appointed—and because, of course, the worthy Kaltenbrunner did not forget Hamerling's application a second time!

[2] The Gymnasium prepared pupils for the Abitur and the University. Main subjects Greek and Latin; two foreign languages, usually French and English, included in the curriculum.

From the external point of view therefore Kaltenbrunner's negligence was responsible for the decisive turning point in Hamerling's life; otherwise Hamerling would have stagnated in Budapest. This is not intended as a criticism of Budapest; but the fact remains that Budapest would have been a spiritual desert for Hamerling and he would have been unable to develop his particular talents. And our biographer would now be able to tell us how it was that Hamerling had been transferred from Graz to Trieste—because Kaltenbrunner had simply overlooked Hamerling's application.

Now this is a striking incident and one could find countless others of its kind in life. And he who seeks to measure life by the yard-stick of external events will scarcely find causes, even if he believes that he is able to establish causal relationships, that are more closely connected with their effects than the negligence of the worthy Kaltenbrunner with the spiritual development of Robert Hamerling. I make this observation simply to call your attention to the fact that it is imperative to implant in the hearts of men this principle: that external life as it unfolds must be seen simply as a symptom that reveals its inner meaning.

In my last lecture I spoke of the forties to the seventies as the critical period for the bourgeoisie. I pointed out how the bourgeoisie had been asleep during these critical years and how the end of the seventies saw the beginning of those fateful decades which led to our present situation [i.e. the war of 1914–18]. I spent the first years of these decades in Austria. Now as an Austrian living in the last third of the nineteenth century one was in a strange position if one wished to participate in the cultural life of the time. It is of course easy for me to throw light on this situation from the standpoint of a young man who spent his formative years in Austria and who was German by descent and racial affiliation. To be a German in Austria is totally different from being a German in the Reich [i.e. Imperial Germany] or in Switzerland. One must, of course, endeavour to understand everything in life and one can understand everything; one can adapt oneself to everything. But if, for example, one were to raise the question: what does an Austro-German feel about the social structure in which he lives and is it possible for an Austro-German without first having adapted himself to it, to have any understanding of that peculiar civic consciousness one finds in Switzerland? Then the answer to this question must be an emphatic no! The Austro-German grew up in an environment that makes it totally impossible for him to understand—unless he forced himself to do so artificially—that inflexible civic consciousness peculiar to the Swiss.

But these national differentiations are seldom taken into account. We must however give heed to them if we are to understand the difficult problems in this domain which face us now and in the immediate future. It was significant that I spent my formative years in an environment where the most important things did not really concern me. I would not mention this if it were not in fact the most important experience of the true-born German-Austrian. In some it finds expression in one way, in others in another way. To some extent I lived as a typical Austrian. From the age of eleven to eighteen I had to cross twice a day the river Leitha which formed the frontier between Austria and Hungary since I lived at Neudörfl in Hungary and attended school in Wiener-Neustadt. It was an hour's journey on foot and a quarter of an hour's by slow train—there were no fast trains, nor are there any today I believe—and each time I had to cross the frontier. Thus one came to know the two faces of what is called abroad ‘Austria.’ Formerly things were not so easy in the Austrian half of the Empire. Today one cannot say things are easier (that is unlikely), but different. Up till now one had to distinguish two parts of the Austrian Empire. Officially one half was called, not Austria, but ‘the Kingdoms and “lands” represented in the Federal Council’, i.e. Cis-Leithania, which included Galicia, Bohemia, Silesia, Moravia, Upper and Lower Austria, Salzburg, the Tyrol, Styria, Carniola, Carinthia, Istria and Dalmatia. The other half, Trans-Leithania,[3] consisted of the ‘lands’ of the Crown of St. Stephen, i.e. what is called abroad Hungary, which included also Croatia and Slavonia. Then, after the eighties, there was the territory of Bosnia and Herzegovina, occupied up to 1909 and later annexed, which was jointly administered by the two halves of the Empire.

[3] Realschule. Here emphasis on Mathematics and Natural Sciences. This type of school had a vocational bias.

Now in the area where I lived, even amongst the most important centres of interest, I did not find anything which really interested me between the ages of eleven and eighteen. The first important landmark was Frohsdorf, a castle inhabited by Count de Chambord, a member of the Bourbon family, who had made an unsuccessful attempt in 1871 to ascend the throne of France under the name of Henry V. There were many other peculiarities attaching to him. He was an ardent supporter of clericalism. In him, and in everything associated with him, one could perceive a world in decline, one could catch the atmosphere of a world that was crumbling in ruins. There were many things one saw there, but they were of no interest. And one felt: here is something which was once considered to be of the greatest importance and which many today still regard as immensely important. But in reality it is a bagatelle and has no particular importance.

The second thing in the neighbourhood was a Jesuit monastery, a genuine Jesuit monastery. The monks were called Redemptorists,[4] an offshoot of the Jesuits. This monastery was situated not far from Frohsdorf. One saw the monks perambulating, one learned of the aims and aspirations of the Jesuits, one heard various tales about them, but this too was of no interest. And again one felt: what has all this to do with the future evolution of mankind? One felt that these monks in their black cowls were totally unrelated to the real forces which are preparing man's future development.

[4] Leitha and Leithania. The Leitha a tributary of the Danube. Up till 1918 formed the frontier between Austria and Hungary. The Austrian half of the Empire called Cis-Leithania, the Hungarian half, Trans-Leithania. See The Hapsburg Empire by C. S. Macartney, 1968.

The third thing in the locality where I lived was a masonic lodge. The local priest used to inveigh against it, but of course the lodge meant nothing to me for one was not permitted to enter. It is true the porter allowed me on one occasion to look inside, but in strict secrecy. On the following Sunday, however, I again heard the priest fulminating against the lodge. In brief, this too was something that did not concern me.

I was therefore well prepared when I matured and became more aware to be influenced by things which formerly held no interest for me. I regard it as very significant and a fortunate dispensation of my karma that, whilst I had been deeply interested in the spiritual world in my early years, in fact I lived my early life on the spiritual plane, I had not been forced by external circumstances into the classical education of the Gymnasium. All that one acquires through a humanistic education I acquired later on my own initiative. At that time the standard of the Gymnasium education in Austria was not too bad; it has progressively deteriorated since the seventies and of recent years has come perilously close to the educational system of neighbouring states. But looking back today I am glad that I was not sent to the Gymnasium in Wiener-Neustadt. I was sent to the Realschule and thus came in touch with a teaching that prepared the ground for a modern way of thinking, a teaching that enabled me to be closely associated with a scientific outlook. I owed this association with scientific thinking to the fact that the best teachers—and they were few and far between—in the Austrian Realschule, which was organized on the most modern lines, were those who were connected in some way with modern scientific thinking.

This was not always true of the school in Wiener-Neustadt. In the lower classes—in the Austrian Realschule religious instruction was given only in the four lower classes—we had a teacher of religion who was a very pleasant fellow, but was quite unfitted to bring us up as devout and pious Christians. He was a Catholic priest and that he was hardly fitted to inspire piety in us is shown by the fact that three young boys who used to call for him every day after school were said to be his sons. But I still hold him in high regard for everything he taught in class apart from his religious instruction. He imparted this religious instruction in the following way: he called on a pupil to read a few pages from a devotional work; then it was set for homework. One did not understand a word, learned it by heart and received high marks, but of course one had not the slightest idea of the contents. His conversation outside the classroom was sometimes beautiful and stimulating and above all warm and friendly.

Now in such a school one passed through the hands of a succession of teachers of widely different calibre. All this is of symptomatic significance. We had two Carmelites as teachers, one was supposed to teach us French, the other English. The latter in particular scarcely knew a word of English; in fact he could not string together a complete sentence. In natural history we had a man who had not the faintest understanding of God and the world. But we had excellent teachers for mathematics, physics, chemistry and especially for projective geometry. And it was they who paved the way for this inner link with scientific thinking. It is to this scientific thinking that I owed the impulse which is fundamentally related to the future aims of mankind today.

When, after struggling through the Realschule, one entered the University, one could not avoid—unless one was asleep—taking an interest in public affairs and the world around. Now the Austro-German—and this is important—arrives at a knowledge of the German make-up in a totally different way from the Reich German.[5] One could have, for example, a superficial interest in Austrian state-affairs, but one could scarcely feel a real inner relationship to them if one were interested in the evolution of mankind. On the other hand, as in my own case, one could have recourse to the achievements of German culture at the end of the eighteenth and at the beginning of the nineteenth century and to what I should like to call Goetheanism. As an Austro-German one responds to this differently from the Reich German. One should not forget that once one has become inured to the natural scientific outlook through a modern education one outgrows a certain artificial milieu which has spread over the whole of Western Austria in recent time. One outgrows the clerical Catholicism to which the people of Western Austria only nominally adhere, an extremely pleasant people for the most part—I exclude myself of course. This clerical Catholicism has never touched their lives deeply.

[5] Redemptorists. St. Alfonso de Liguori (1696–1787) founded the Congregation of the Most Holy Redeemer. Members of this congregation called Redemptorists. Undertook missionary work in the dioceses around Naples, heard confession and supervised schools. St. Alfonso beatified 1816 and canonized 1839.

In the form it has assumed in Western Austria this clerical Catholicism is a product of the Counter-Reformation, of the ‘Hausmacht’ policy of the Hapsburgs. The ideas and impulses of Protestantism were fairly widespread in Austria, but the Thirty Years' War and the events connected with it enabled the Hapsburgs to initiate a Counter-Reformation and to impose upon the extremely gifted and intelligent Austro-German people that terrible obscurantism, which must be imposed when one diffuses Catholicism in the form which prevailed in Austria as a consequence of the Counter-Reformation. Consequently men's relationship to religion and religious issues becomes extremely superficial. And happiest are those who are still aware of this superficial relationship. The others who believe that their faith, their piety is honest and sincere are unwittingly victims of a monstrous illusion, of a terrible lie which destroys the inner life of the soul.

With a background of natural science it is impossible of course to come to terms with this frightful psychic mishmash which invades the soul. But there are always a few isolated individuals who develop themselves and stand apart from it. They find themselves driven towards the cultural life which reached its zenith in Central Europe at the end of the eighteenth and in the early nineteenth century. They come in touch with the current of thought which began with Lessing, was carried forward by Herder, Goethe and the German Romantics and which in its wider context can be called Goetheanism.

In these decades it was of decisive importance for the Austro-German with spiritual aspirations that—living outside the folk community to which Lessing, Goethe, Herder etcetera belonged, and transplanted into a wholly alien environment over the frontier—he imbibed there the spiritual perception of Goethe, Schiller, Lessing and Herder. Nothing else impressed one; one imbibed only the Weltanschauung of Weimar classicism—and in this respect one stood apart, isolated and alone. For again one was surrounded by those phenomena which did not concern one.

And so one was associated with something that one gradually felt to be second nature, something, however, that was uprooted from its native soil and which one cherished in one's inmost soul in a community which was interested only in superficialities. For it was anomalous to cherish Goethean ideas at a time when the world around was enthusiastic—but the words of enthusiasm were pompous and artificial, without any suggestion of sincere and honest endeavour—about such publications (and I could give other examples) as the book of the then Crown Prince Rudolf, An Illustrated History of Austria. The book in fact was the work of ghost writers. One had no affinity with this trash, though, it is true, one belonged outwardly to this world of superficiality. One treasured in one's soul that which was an expression of the Central European spirit and which in a wider context I should like to call Goetheanism.

This Goetheanism, with which I associate the names of Schiller, Lessing, Herder and also the German philosophers, occupies a singularly isolated position in the world. And this isolation is extremely significant for the whole evolution of modern mankind for it causes those who wish to embark upon a serious study of Goetheanism to become a little reflective.

Looking back over the past one asks oneself: what have Lessing, Goethe and the later German Romantics, approximately up to the middle of the nineteenth century, contributed to the world? In what respect is this contribution related to the historical evolution prior to Lessing's time? Now it is well known that the emergence of Protestantism out of Catholicism is intimately connected with the historical evolution of Central Europe. We see, on the one hand, in Central Europe, in Germany for example—I have already discussed the same phenomenon in relation to Austria—the survival of the universalist impulse of Roman Catholicism. In Austria its influence was more external, as I have described, in Germany more inward. Now there is a vast difference between the Austrian Catholic and the Bavarian Catholic, and many of these differences which have survived date back to the remote past. Then came the invasion of Catholic culture by Protestantism or Lutheranism, which in Switzerland took the form of Calvinism or Zwinglianism.[6] Now a high proportion of the German people, especially the Reich Germans, was Lutheran. But strangely enough there is no connection whatsoever between Lutheranism and Goetheanism! It is true that Goethe had studied both Lutheranism and Catholicism, though somewhat superficially. But when one considers the ferment in Goethe's soul, one can only say that throughout his life it was a matter of indifference to him whether one professed Catholicism or Protestantism. Both confessions could be found in his entourage, but he was in no way connected with them. To this aperçu the following can be added. Herder[7] was pastor and later General Superintendent in Weimar. As pastor, of course, he had received much from Luther externally and was familiar with his teachings; yet he was aware that his outlook and thinking had nothing in common with Lutheranism and that he had entirely outgrown the Lutheran faith. Thus, in everything associated with Goetheanism—and I include men such as Herder and others—we have in this respect a completely isolated phenomenon. When we enquire into the nature of this isolated phenomenon we find that Goetheanism is a crystallization of all kinds of impulses of the fifth post-Atlantean epoch. Luther did not have the slightest influence on Goethe; Goethe, however, was influenced by Linnaeus,[8] Spinoza and Shakespeare, and on his own admission these three personalities exercised the greatest influence upon his spiritual development.

[6] The ‘Reich German’ refers to the German who was born and lived in Imperial Germany pre-1918.

[7] Gottfried Herder (1744–1803). A seminal influence in theology, history, philosophy and pedagogy. Friend of Goethe. See any history of German literature.

[8] Karl von Linné or Linnaeus (1707–78). Swedish naturalist. Inventor of artificial system of plant classification.

Thus Goetheanism stands out as an isolated phenomenon and that is why it can never become popular. For the old entrenched positions persist; not even the slightest attempt was made to promote the ideas of Lessing, Schiller, and Goethe amongst the broad masses of the population, let alone to encourage the feelings and sentiments of these personalities. Meanwhile an outmoded Catholicism on the one hand, and an outmoded Lutheranism on the other hand, lived on as relics from the past. And it is a significant phenomenon that, within the cultural stream to which Goethe belonged and which produced a Goethe, the spiritual activities of the people are influenced by the sermons preached by the Protestant pastors. Amongst the latter are a few who are receptive to modern culture, but that is of no help to them in their sermons. The spiritual nourishment offered by the church today is antediluvian and is totally unrelated to the demands of the time; it cannot lend in any way vitality or vigour. It is associated, however, with another aspect of our culture, that aspect which is responsible for the fact that the spiritual life of the majority of mankind is divorced from reality. Perhaps the most significant symptom of modern bourgeois philistinism is that its spiritual life is remote from reality, all its talk is empty and unreal.

Such phenomena, however, are usually ignored, but as symptoms they are deeply significant. You can read the literature of the war-mongers over recent decades and you will find that Kant is quoted again and again. In recent weeks many of these war-mongers have turned pacifist, since peace is now in the offing. But that is of no consequence; philistines they still remain, that is the point. The Stresemann[9] of today is the same Stresemann of six weeks ago. And today it is customary to quote Kant as the ideal of the pacifists. This is quite unreal. These people have no understanding of the source from which they claim to have derived their spiritual nourishment.

[9] Gustav Stresemann (1878–1929). National liberal politician. Post-1918 advocated a policy of reconciliation. Chancellor 1923. Foreign Minister 1923–9. Nobel Peace Prize 1926.

That is one of the most characteristic features of the present time and accounts for the strange fact that a powerful spiritual impulse, that of Goetheanism, has met with total incomprehension. In face of the present catastrophic events this thought fills us with dismay. When we ask: what will become of this wave—one of the most important in the fifth post-Atlantean epoch—given the atmosphere prevailing in the world today, we are filled with sadness.

In the light of this situation the decision to call the centre which wishes to devote its activities to the most important impulses of the fifth post-Atlantean epoch the ‘Goetheanum’ irrespective of the fate which may befall it, has a certain importance. That this building shall bear the name ‘Goetheanum’ for many years to come is of no consequence; what is important is that the thought even existed, the thought of using the name ‘Goetheanum’ in these most difficult times.

Precisely through the fact I have mentioned to you, Goetheanism in its isolation could become something of unique importance when one lived at the aforesaid time in Austria where one's interests were limited. For if people had understood that Goetheanism was something which concerned them, the present catastrophe would not have arisen. This and many other factors enabled isolated individuals in the German-speaking areas of Austria—the broad masses live under the heel of the Catholicism of the Counter-Reformation—to develop a deep inner relationship to Goetheanism. I made the acquaintance of one of these personalities, Karl Julius Schröer,[10] who lived and worked in Austria. In every field in which he worked he was inspired by the Goethe impulse. History will one day record what men such as Karl Julius Schröer thought about the political needs of Austria in the second half of the nineteenth century. These people who never found a hearing were aware to some extent how the present situation could have been avoided, but that it was nevertheless inevitable because no one would listen to them.

[10] K. J. Schröer (1825–1900). Literary historian. Professor of history of literature at the Technische Hochschule in Vienna. Teacher of R. Steiner. See latter's Course of my Life.

On arriving in Imperial Germany one had above all the impression, when one had developed a close spiritual affinity with Goethe, that there was nowhere any understanding of this affinity. I came to Weimar in autumn 1889—I have already described the pleasing aspects of life in Weimar—but what I treasured in Goethe (I had already published my first important book on Goethe) met with little understanding or sympathy because it was the spiritual element in him that I valued. Outwardly and inwardly life in Weimar was wholly divorced from any connection with Goethean impulses. In fact these Goethean impulses were completely unknown in the widest circles, especially amongst professors of the history of literature who lectured on Goethe, Lessing and Herder in the universities—unknown amongst the philistines who perpetrated the most atrocious biographies of Goethe. I could only find consolation for these horrors by reading the publications of Schröer and the excellent book of Herman Grimm which I came across relatively early in my life. But Herman Grimm was never taken seriously by the universities. They regarded him as a dilettante, not as a serious scholar.

No genuine university scholar of course has ever made the effort to take K. J. Schröer seriously; he is always treated as a light-weight. I could give many examples of this. But one should not forget that the literary world with its many ramifications—including, if I may say so, journalism—has been under the influence of a bourgeoisie that has been declining in recent decades, a bourgeoisie which is fast asleep and which, when it embarks upon spiritual activities, has no understanding of their real meaning. Under these circumstances it is impossible of course to arrive at any understanding of Goetheanism. For Goethe himself is, in the best sense of the word, the most modern spirit of the fifth post-Atlantean epoch.

Consider for a moment his unique characteristics. First, his whole Weltanschauung—which can be raised to a higher spiritual level than Goethe himself could achieve—rests upon a solid scientific foundation. At the present time a firmly established Weltanschauung cannot exist without a scientific basis. That is why there is a strong scientific substratum to the book with which I concluded my Goethe studies in 1897. (The book has now been republished for reasons similar to those which led to the re-issue of The Philosophy of Freedom.) The solid body of philistines said at that time (it was a time when my books were still reviewed): the title of the book is Goethe's Conception of the World [T3]; in reality he ought to call it ‘Goethe's conception of nature.’ The so-called Goethe scholars, the literary historians, philosophers and the like failed to realize that it is impossible to present Goethe's Weltanschauung unless it is firmly anchored in his conception of nature.

[T3] Anthroposophical Publishing Co., London and Anthroposophic Press, New York, 1928.

A second characteristic which shows Goethe to be the most modern spirit of the fifth post-Atlantean age is the way in which that peculiar spiritual path unfolds within him which leads from the intuitive perception of nature to art. In studying Goethe it is most interesting to follow this connection between perception of nature and artistic activity, between artistic creation and artistic imagination. One touches upon thousands of questions, which are not dry, theoretical questions but questions instinct with life, when one studies this strange and peculiar process which always takes place in Goethe when he observes nature as an artist, but sees it on that account no less in its reality, and when he works as an artist in such a way that, to quote his own words, one feels art to be something akin to the continuation of divine creation in nature at a higher level.

A third characteristic typical of Goethe's Weltanschauung is his conception of man. He sees him as an integral part of the universe, as the crowning achievement of the entire universe. Goethe always strives to see him, not as an isolated being, but imbued with the wisdom that informs nature. For Goethe the soul of man is the stage on which the spirit of nature contemplates itself. But these thoughts which are expressed here in abstract form have countless implications if they are pursued concretely. And all this constitutes the solid base on which we can build that which leads to the supreme heights of spiritual super-sensible perception in the present age. If one points out today that mankind as a whole has failed to give serious attention to Goethe—and it has failed in this respect—has failed to develop any relationship to Goetheanism, then it is certainly not in order to criticize, lecture or reproach mankind as a whole, but simply to invite them to undertake a serious study of Goetheanism. For to pursue the path of Goetheanism is to open the doors to an anthroposophically orientated spiritual science. And without Anthroposophy the world will not find a way out of the present catastrophic situation. In many ways the safest approach to spiritual science is to begin with the study of Goethe.

All this is related to something else. I have already pointed out that this shallow spiritual life which is preached from the pulpit and which then becomes for many a living lie of which they are unconscious—all this is outmoded. And fundamentally the erudition in all the faculties of our universities is equally outmoded. This erudition becomes an anomaly where Goetheanism exists alongside it. For a further characteristic feature of Goethe's personality is his phenomenal universality. It is true that in various domains Goethe has sowed only the first seeds, but these seeds can be cultivated everywhere and when cultivated contain the germ of something great and grandiose, the great modern impulse which mankind prefers to ignore, and compared with which modern university education in its outlook and attitude is antediluvian. Even though it accepts new discoveries, this modern university education is out of date. But at the same time there exists a true life of the spirit, Goetheanism, which is ignored. In a certain sense Goethe is the universitas litterarum, the hidden university, and in the sphere of the spiritual life it is the university education of today that usurps the throne. Everything that takes place in the external world and which has led to the present catastrophe is, in the final analysis, the result of what is taught in our universities. People talk today of this or that in politics, of certain personalities, of the rise of socialism, of the good and bad aspects of art, of Bolshevism, etcetera; they are afraid of what may happen in the future, they envisage such and such occupying a certain post, and there are those who six weeks ago said the opposite of what they say today ... such is the state of affairs. Where does all this originate? Ultimately in the educational institutions of the present day. Everything else is of secondary importance if people fail to see that the axe must be laid to the tree of modern education. What is the use of developing endless so-called clever ideas, if people do not realize where in fact the break with the past must be made?

I have already spoken of certain things which did not concern me. I can now tell you of something else which did not concern me. When I left the Realschule for the university I entered my name for different lecture courses and attended various lectures. But they held no interest for me; one felt that they were quite out of touch with the impulse of our time. Without wishing to appear conceited I must confess that I had a certain sympathy for that universitas, Goetheanism, because Goethe also found that his university education held little interest for him. And at the royal university of Leipzig in the (then) Kingdom of Saxony, and again at Strasbourg university in later years, he took virtually no interest in the lectures he attended. And yet everything, even the quintessence of the artistic in Goethe rests upon the solid foundation of a rigorous observation of nature. In spite of all university education he gradually became familiar with the most modern impulses, even in the sphere of knowledge. When we speak of Goetheanism we must not lose sight of this. And this is what I should have liked to bring to men's attention in my Goethe studies and in my book Goethe's Conception of the World. I should have liked to make them aware of the real Goethe. But the time for this was not ripe; to a large extent the response was lacking. As I mentioned recently the first indications were visible in Weimar where the soil was to some extent favourable. But nothing fruitful came of it. Those who were already in entrenched positions barred the way to those who could have brought a new creative impulse. If the modern age were imbued in some small measure with Goetheanism, it would long for spiritual science, for Goetheanism prepares the ground for the reception of spiritual science. Then Goetheanism would again become a means whereby a real regeneration of mankind today could be achieved. One cannot afford to take a superficial view of our present age.

After my lecture in Basel yesterday [T4] I felt that no honest scientist could deny what I had to say on the subject of super-sensible knowledge if he were prepared to face the facts. There are no logical grounds for rejecting spiritual knowledge; the real cause for rejection is to be found in that barbarism which in all regions of the civilized world is responsible for the present catastrophe. It is profoundly symbolic that a few years ago a Goethe society had nothing better to do than to appoint as president a former finance minister—a typical example of men's remoteness from what they profess to honour. This finance minister who, as I said recently, bears, perhaps symptomatically, the Christian name ‘Kreuzwendedich’ believes of course, in his fond delusion, that he pays homage to Goethe. With a background of modern education he has no idea and can have no idea how far, how infinitely far removed he is from the most elementary understanding of Goetheanism.

[T4] Rechtfertigung der übersinnlichen Erkenntnis durch die Naturwissenschaft, 31st October, 1918 (see Bibl. Nr. 72).

The climate of the present epoch is unsuited to a deeper understanding of Goetheanism. For Goetheanism has no national affiliation, it is not something specifically German. It draws nourishment from Spinoza, from Shakespeare, from Linnaeus—none of whom is of German origin. Goethe himself admitted that these three personalities exercised a profound influence upon him—and in this he was not mistaken. (He who knows Goethe recognizes how justified this admission is.) Goetheanism could determine men's thinking, their religious life, every branch of science, the social forms of community life, the political life ... it could reign supreme everywhere. But the world today listens to windbags such as Eucken [11] or Bergson and the like ... (I say nothing of the political babblers, for in this realm today adjective and substantive are almost identical).

[11] R. Eucken (1846–1929). Professor of Philosophy, Jena. Pioneer of idealist metaphysics. H. Bergson (1859–1941). Professor at Collège de France. Famous for his book Creative Evolution and the idea of the élan vital as the fundamental stuff of the Universe.

What we have striven for here—and which will arouse such intense hatred in the future that its realization is problematical, especially at the present time—is a living protest against the alienation of spiritual life today from reality. And this protest is best expressed by saying: what we wanted to realize here is a Goetheanum. When we speak here of a Goetheanum we bear witness to the most important characteristics and also to the most important demands of our time. And amid the philistine world of today this Goetheanum at least has been willed and should tower above this present world that claims to be civilized.

Of course, if the wishes of many contemporaries had been fulfilled, one could perhaps say that it would have been more sensible to speak of a Wilsonianum [12], for that is the flag under which the present epoch sails. And it is to Wilsonism that the world at the present time is prepared to submit and probably will submit.

[12] Thomas Woodrow Wilson (1856–1924). Professor of Jurisprudence and Political Economy. President U.S.A. 1912–20. Author of the ‘Fourteen Points’ as basis for peace 1918. Idea of a ‘League of Nations’ stemmed from him; also of a world government to prevent future wars.

Now it may seem strange to say that the sole remedy against Wilsonism is Goetheanism. Those who claim to know better come along and say: the man who talks like this is a utopian, a visionary. But who are these people who coin this phrase: he is an innocent abroad—who are they? Why, none other than those worldly men who are responsible for the present state of affairs, who always imagined themselves to be essentially ‘practical’ men. It is they of course who refuse to listen to words of profound truth, namely, that Wilsonism will bring sickness upon the world, and in all domains of life the world will be in need of a remedy and this remedy will be Goetheanism.

Permit me to conclude with a personal observation on the interpretation of my book Goethe's Conception of the World which has now appeared in a second edition. Through a strange concatenation of circumstances the book has not yet arrived; one is always ready to make allowances, especially at the present time. It was suggested by men of ‘practical’ experience some time ago, months ago in fact, that my books The Philosophy of Freedom and Goethe's Conception of the World should be forwarded here direct from the printers and so avoid going via Berlin and arrive here more quickly. One would have thought that those who proffered this advice were knowledgeable in these matters. I was informed that The Philosophy of Freedom had been despatched, but after weeks and weeks had not arrived. For some time people had been able to purchase copies in Berlin. None was to be had here because somewhere on the way the matter had been in the hands of the ‘practical’ people and we unpractical people were not supposed to interfere. What had happened? The parcel had been handed in by the ‘practical’ people of the firm who had been told to send it to Dornach near Basel. But the gentleman responsible for the despatch said to himself: Dornach near Basel; that is in Alsace, for there is a Dornach there which is also near Basel ... there is no need to pay foreign postage, German stamps will suffice. And so, on ‘practical’ instructions the parcel went to Dornach in Alsace where, of course, they had no idea what to do with it. The matter had to be taken up by the unpractical people here. Finally, after long delays when the ‘practical’ gentleman had satisfied himself that Dornach near Basel is not Dornach in Alsace, The Philosophy of Freedom arrived. 
Whether the other book, Goethe's Conception of the World, instead of being sent from Stuttgart to Dornach near Basel has been sent by some ‘practical’ person via the North Pole, to arrive finally in Dornach after travelling round the globe, I cannot say. In any case, this is only one example that we have experienced personally of the ‘practical’ man's contribution to the practical affairs of daily life.

This is what I was first able to undertake personally in a realm that lay close to my heart—more through external circumstances than through my own inclination—in order to be of service to the epoch. And when I consider what was the purpose of my various books, which are born of the impulse of the time, I believe that these books answer the demands of our epoch in widely divergent fields. They have taught me how powerful have been the forces in recent decades acting against the Spirit of the age. However much in their ruthlessness people may believe that they can achieve their aims by force, the fact remains that nothing in reality can be enforced which runs counter to the impulses of the time. Many things which are in keeping with the impulses of the time can be delayed; but if they are delayed they will later find scope for expression, perhaps under another name and in a totally different context. I believe that these two books, amongst other things, can show how, by observing one's age, one can be of service to it. One can serve one's age in every way, in the simplest and most humble activities. One must simply have the courage to take up Goetheanism which exists as a Universitas liberarum scientiarum alongside the antediluvian university that everyone admires today, the socialists of the extreme left most of all.

It might easily appear as if these remarks are motivated by personal animosity and therefore I always hesitate to express them. One is of course a target for the obvious accusation—‘Aha, this fellow abuses universities because he failed to become a university professor!’ ... One must put up with this facile criticism when it is necessary to show that those who advocate this or that from a political, scientific, political-economic or confessional point of view of some kind or other fail to put their finger on the real malady of our time. Only those point to the real malady who draw attention to the pernicious dogma of infallibility which, through the fatal concurrence of mankind, has led to the surrender of everything to the present domination of science, to those centres of official science where the weeds grow abundantly, alongside a few healthy plants of course. I am not referring to a particular individual or particular university professor (any more than when I speak of states or nations I am referring to a particular state or nation)—they may be excellent people, that is not the point. The really important question is the nature of the system.

And how serious this situation is, is shown by the fact that the technical colleges which have begun to lose a little of their natural character now assume university airs and so have gone rapidly downhill and become corrupted by idleness.

I want you to consider the criticisms I have made today as a kind of interlude in our anthroposophical discussions. But I think that the present epoch offers such a powerful challenge to our thoughts and sentiments in this direction that these enquiries must be undertaken by us especially because, unfortunately, they will not be undertaken elsewhere.

Our present age is still very far removed from Goetheanism, which certainly does not imply studying the life and works of Goethe alone. Our epoch sorely needs to turn to Goetheanism in all spheres of life. This may sound utopian and impractical, but it is the most practical answer at the present time. When the different spheres of life are founded on Goetheanism we shall achieve something totally different from the single achievement of the bourgeoisie today—rationalism. He who is grounded in Goetheanism will assuredly find his way to spiritual science. This is what one would like to inscribe in letters of fire in the souls of men today.

This has been my aim for decades. But much of what I have said from the depths of my heart and which was intended to be of service to the age has been received by my contemporaries as an edifying Sunday afternoon sermon, for in reality those who are happy in their cultural sleep ask nothing more. We must seek concretely to discover what the epoch demands, what is necessary for our age—this is what mankind so urgently needs today. And above all we must endeavour to gain insight into this, for today insight is all important. Amidst the vast confusion of our time, a confusion that will soon become worse confounded, it is futile to ask: what must the individual do? What he must do first and foremost is to strive for insight and understanding so that the infallibility in the domain that I referred to today is directed into the right channel.

My book Goethe's Conception of the World was written specially in order to show that in the sphere of knowledge there are two streams today: a decadent stream which everyone admires, and another stream which contains the most fertile seeds for the future, and which everyone avoids. In recent decades men have suffered many painful experiences—and often through their own fault. But they should realize that they have suffered most—and worse is still to follow—at the hands of their schoolmasters of whom they are so proud. It appears that mankind must needs pass through the experiences which they have to undergo at the hands of the world schoolmaster, for they have contrived in the end to set up a schoolmaster as world organizer. Those windbags who have persuaded the world with their academic twaddle are now joined by another who proposes to set the world to right with empty academic rhetoric.

I have no wish to be pessimistic. These words are spoken in order to awaken those impulses which will answer Wilsonism with Goetheanism. They are not inspired by any kind of national sentiment, for Goethe himself was certainly not a nationalist; his genius was universal. The world must be preserved from the havoc that would follow if Wilsonism were to replace Goetheanism!